Chapter 12: Rational Asymptotics #

...our cab’net’s fractured into factions

Try not to crack under the stress,

we’re breaking down like fractions— Lin-Manuel Miranda (Hamilton)

Note: A SageMath Jupyter notebook corresponding to this chapter can be downloaded here.

A C-finite sequence $(f_n) = f_0,f_1,\dots$ is a sequence that satisfies a linear recurrence $$ \qquad f_n + c_1f_{n-1} + \cdots + c_r f_{n-r} = 0 \qquad (\star) $$ for all $n$ larger than some integer $N \geq r$ where each $c_1,\dots,c_r \in \mathbb{Q}$ with $c_0\neq0.$ We say the integer $r$ is the order of the C-finite recurrence $(\star).$

Note: The solutions to $(\star)$ form a $\mathbb{C}$-vector space (meaning adding any two solutions or multiplying every term in a solution gives another solution). A particular sequence satisfying $(\star)$ is distinguished by its initial conditions $f_0,\dots,f_N.$

Example: The Virahanka-Fibonacci numbers $(f_n)$ satisfy the recurrence $$f_{n+2} = f_{n+1} + f_{n} \qquad (n \geq 0) $$ together with the initial conditions $f_0=f_1=1.$ If desired this recurrence can also be expressed as $$f_{n} - f_{n-1} - f_{n-2} = 0 \qquad (n \geq 2) $$ to match the form of $(\star).$

Rational Generating Functions #

One of the most important properties of C-finite sequences is that they have rational generating functions (and vice-versa).

Theorem 1: The sequence $(f_n)$ satisfies the C-finite recurrence $(\star)$ if and only if its generating function $F(z) = \sum_{n \geq 0} f_nz^n$ is the rational function $F(z) = G(z)/H(z)$ where $$ \begin{aligned} H(z) &= 1 + c_1z + \cdots + c_rz^r \\[+2mm] G(z) &= g_0 + g_1z + \cdots + g_{N-1}z^{N-1} \end{aligned} $$ where $$ g_k = f_k + c_1f_{k-1} + \cdots + c_r f_{k-r} $$ for all $0 \leq k \leq N-1$ (we set $f_k=0$ when $k<0$).

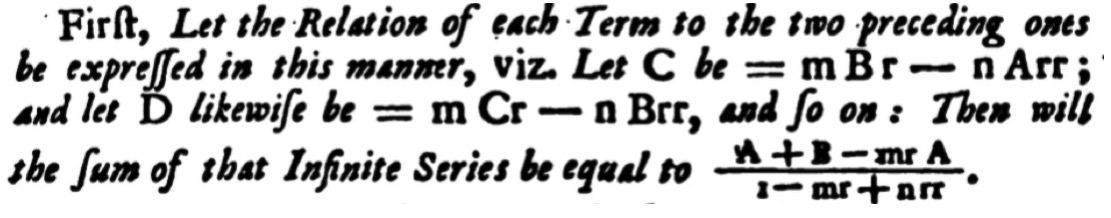

Theorem 1 was essentially established by de Moivre in 1730, in one of the first uses of generating functions.

Proof of Theorem 1

Example: Fix a positive integer $k.$ Our unlabelled constructions can derive that the generating function $W$ for binary strings with no blocks of size larger than $k$ is $$ W(z) = \frac{1-z^{k+1}}{1-2z+z^{k+1}}. $$ Thus, if $w_n$ is the number of such strings then $w_n = 2w_{n-1} - w_{n-k-1}$ for all $n \geq k+1.$

Many combinatorial classes have natural recursive structure that lead to C-finite recurrences, however some surprisingly “complicated” sequences can be C-finite.

Example: Conway’s look and say sequence $$(\ell_n)=1,11,21,1211,\dots$$ starts with $1$ and follows by reading the terms aloud (so $1$ reads as “one $1$” and becomes $11,$ etc.). If $d_n$ denotes the number of (base ten) digits in $\ell_n$ then Conway proved $(d_n)$ has a rational generating function $$ D(z) = \frac{G(z)}{H(z)} = \frac{1+z+\cdots+18z^{77}-12z^{78}}{1-z+\cdots-9z^{71}+6z^{72}}.$$ In fact, there is a rational generating function with denominator $H(z)$ even if the starting term $1$ is replaced by any other natural number except the fixed point $22.$

Corollary: If $(f_n)$ and $(g_n)$ are C-finite then so are $(f_n+g_n)$ and $\left(\sum_{k=0}^n f_kg_{n-k}\right).$

Proof of Corollary

The C-Finite Coefficient Theorem #

The fact that a C-finite sequence has a rational generating function allows us to compute an explicit expression for any sufficiently large term as a finite sum involving powers of algebraic numbers. Before stating this result we need some algebraic preliminaries.

Fact 1 – Fundamental Theorem of Algebra: Any polynomial $P(x) \in \mathbb{Q}[x]$ of degree $d$ can be factored into linear factors $P(x)=C(x-\lambda_1)\cdots(x-\lambda_d)$ with $C$ and the $\lambda_i$ being complex numbers (note that the $\lambda_i$ may repeat).

Grouping together roots that appear multiple times, we can write any polynomial in the form $$P(x) = C(x-\lambda_1)^{d_1} \cdots (x-\lambda_s)^{d_s}$$ where the $\lambda_i$ that appear are distinct. We say that the root $\lambda_i$ of $P$ has multiplicity $d_i$ and call a root of multiplicity 1 a simple root. Working directly from the definition, determining the multiplicity of a root of $P$ requires factoring $P$ over the complex numbers – although this can be done in polynomial time, there is a simpler way to determine multiplicities.

Lemma 1: The multiplicity of a root $\lambda_i$ of $P(x) \in \mathbb{Q}[x]$ is the smallest positive integer $k$ such that $$ P(\lambda_i) = P^\prime(\lambda_i) = \cdots = P^{(k-1)}(\lambda_i) = 0$$ and $P^{(k)}(\lambda_i) \neq 0,$ where $P^{(j)}$ represents the $j$th derivative of $P.$

Proof of Lemma 1

Fact 2 – Partial Fraction Decomposition: Suppose $F(z)=G(z)/H(z)$ with $\deg G < \deg H$ and $$ H(z) = C(z-\lambda_1)^{d_1} \cdots (z-\lambda_s)^{d_s} $$ where the $\lambda_i$ are distinct non-zero complex numbers. Then there exist complex numbers $C_i^{(j)}$ such that $$ F(z) = \sum_{i=1}^s \sum_{j=1}^{d_i} \frac{C_i^{(j)}}{(1-z/\lambda_i)^j}.$$

We are now ready to determine an explicit representation for the terms in a C-finite sequence.

C-Finite Coefficient Theorem: Suppose that $(f_n)$ is a C-finite sequence with rational generating function $$F(z)=R(z) + \frac{G(z)}{H(z)}$$ where $\deg G < \deg H$ and $H(0)=1.$ If $\lambda_1,\dots,\lambda_s$ form the distinct roots of $H$ with multiplicities $d_1,\dots,d_s$ then $$ f_n = p_1(n) \lambda_1^{-n} + \cdots + p_s(n) \lambda_s^{-n}$$ for all $n > \deg R,$ where $p_i(n)$ is a polynomial in $n$ of degree at most $d_i-1.$

Note: The condition that $\deg G < \deg H$ is not restrictive, as if $\deg G \geq \deg H$ then we can divide $G$ by $H$ to get a remainder that adds to $R$ and a new numerator of degree less than $H.$ The condition that $H(0)=1$ is not restrictive as we can assume that $H(0)\neq0$ whenever $F$ has a power series expansion at the origin, and divide $G$ and $H$ by the leading constant of $H$ to make $H(0)=1.$

Proof of C-Finite Coefficient Theorem

Let $n > \deg R$ so that $f_n = [z^n]\frac{G(z)}{H(z)}.$ Expanding this rational function in a partial fraction decomposition gives $$ \frac{G(z)}{H(z)} = \Lambda_1(z) + \cdots \Lambda_s(z)$$ where $$ \Lambda_i(z) = \frac{C_i^{(1)}}{1-z/\lambda_1} + \cdots + \frac{C_i^{(d_i)}}{(1-z/\lambda_i)^{d_j}}$$ for constants $C_i^{(j)} \in \mathbb{C}.$ Furthermore, for any $\lambda \neq 0$ and positive integer $m$ the Negative Binomial Theorem implies $$ \begin{aligned} [z^n] (1-z/\lambda)^{-m} = (-\lambda)^{-n}\binom{-m}{n} &= \lambda^{-n}\binom{m+n-1}{m-1} \\[+5mm] &= \lambda^{-n} \cdot \frac{(n+m-1)\cdots(n+1)}{(m-1)!}, \end{aligned}$$ where the second factor in this expression is a polynomial of degree $m-1$ in $n.$

Thus, $[z^n]\Lambda_i(n) = p_i(n)\lambda_i^{-n}$ for a polynomial $p_i$ of degree at most $d_i-1$ and $$ f_n = [z^n]\Lambda_1(z) + \cdots + [z^n]\Lambda_s(z) = p_1(n) \lambda_1^{-n} + \cdots + p_s(n) \lambda_s^{-n}.$$

Combining Theorem 1 above with the C-Finite Coefficient Theorem immediately gives the following.

Corollary: If $(f_n)$ satisfies $$ \qquad f_n + c_1f_{n-1} + \cdots + c_r f_{n-r} = 0 \qquad (\star) $$ for all $n \geq r$ then $ f_n = p_1(n) \lambda_1^{-n} + \cdots + p_s(n) \lambda_s^{-n}$ for all $n\geq0,$ where the $\lambda_i$ are the roots of the characteristic polynomial $$ H(z) = 1 + c_1z + \cdots + c_rz^r $$ of the recurrence $(\star)$ and $p_i(n)$ is a polynomial in $n$ of degree one less than the multiplicity of $\lambda_i$ as a root of $H.$

Example: If $f_0=f_1=1$ and $f_{n+2} = f_{n+1} + f_n$ for all $n \geq 0$ then a partial fraction decomposition shows $$ F(z) = \frac{1}{1-z-z^2} = \frac{1}{\sqrt{5}}\left(\frac{1/\sigma}{1-z/\sigma}-\frac{1/\tau}{1-z/\tau}\right) $$ where $\sigma = (-1+\sqrt{5})/2$ and $\tau = (-1-\sqrt{5})/2$ are the roots of $1-z-z^2.$ Thus, $$ f_n = \frac{1}{\sigma\sqrt{5}} \sigma^{-n} - \frac{1}{\tau\sqrt{5}}\tau^{-n}.$$

Example: Find a closed form for the sequence $(f_n)$ that satisfies $$ f_{n+3} = 3f_{n+1} - 2f_n $$ for all $n \geq 0$ where $f_0=f_1=4$ and $f_2=13.$

Click for Answer

Since the characteristic polynomial $H(z) = 1-3z^2+2z^3$ has the factorization $H(z) = (1-z)^2(1+2z),$ the C-Finite Coefficient Theorem implies that there exist $A,B,C \in \mathbb{C}$ such that $$ f_n = (An+B) \cdot 1^n \quad+\quad C \cdot (-2)^n. $$ Substituting $n=0,1,2$ into this equation gives the $3 \times 3$ linear system $$ \begin{aligned} &(n=0) && 4 = f_0 = B+C \\[+2mm] &(n=1) && 4 = f_1 = A+B-2C \\[+2mm] &(n=2) && 13 = f_2 = 2A+B+4C \end{aligned} $$ for $A,B,C.$ Solving this system gives $(A,B,C) = (3,3,1)$ so that $$f_n = 3(n+1) + (-2)^n.$$

Effective Algebraic Tools #

Although the quadratic formula gives an expression for the roots of degree 2 polynomials “in radicals,” such explicit representations do not hold in general for the roots of polynomials in degree 5 or larger. Thus, one must often work with the roots of a high-degree polynomial $H$ implicitly, for instance specifying regions of the complex plane that each contain precisely one root of $H.$

Many effective tools developed over centuries of work in commutative algebra are useful for this purpose, including

-

the resultant, which gives an effective test to determine if two polynomials share a root over the complex numbers,

-

Descartes’ rule of signs, Sturm sequences, and Rouché’s theorem for counting the number of roots in subsets of the real and complex numbers,

-

Newton Iteration and Alpha Theory for computing numeric approximations to roots and isolating roots in regions of the complex numbers,

-

Gröbner bases, regular chains, and rational univariate representations for solving systems of polynomial equations.

In these notes we focus on examples where such advanced tools are not needed, however they are implemented in most computer algebra systems and are a fundamental tool when dealing with algebraic problems.

Asymptotics of C-Finite Sequences #

Although the C-Finite Coefficient Theorem gives an explicit expression for the terms in a C-finite sequence, it can be laborious to compute the coefficients in all coefficient polynomials $p_k(n).$ Fortunately, if we only need to determine asymptotic behaviour then we can usually save a lot of work.

First, we derive an explicit formula for $p_k(n)$ when $\lambda_k$ is a simple root, in which case $p_k$ is a constant with respect to $n.$

Lemma 2: Assume the hypotheses of the C-Finite Coefficient Theorem. If $\lambda_k$ is a simple root of $H(z)$ and $G(\lambda_k) \neq 0$ then the polynomial $p_k$ in the explicit formula for $f_n$ satisfies $$p_k(n) = \frac{-G(\lambda_k)}{\lambda_kH^\prime(\lambda_k)}.$$

Proof of Lemma 2

In general the coefficients of $p_k(n)$ can be complicated, however its leading term is always given by a relatively simple explicit expression.

Lemma 3: Assume the hypotheses of the C-Finite Coefficient Theorem. If $\lambda_k$ is a root of multiplicity $m$ of $H(z)$ and $G(\lambda_k) \neq 0$ then the polynomial $p_k$ in the explicit formula for $f_n$ satisfies $$p_k(n) = \frac{m (-1)^m G(\lambda_k)}{\lambda_k^m H^{(m)}(\lambda_k)} \; n^{m-1} + O(n^{m-2}), $$ where $H^{(m)}$ denotes the $m$th derivative of $H.$

Proof of Lemma 3

Each root $\lambda_k$ of the denominator $H(z)$ gives a “contribution” of $p_k(n)\lambda_k^{-n}$ to the explicit formula for $f_n.$ To find asymptotics of $f_n,$ it is sufficient to determine which of these contributions grows the fastest.

Recall that the modulus of a complex number $z=a+ib$ with $a,b \in \mathbb{R}$ is the non-negative real number $|z| = \sqrt{a^2+b^2}.$ If $f_n$ is any sequence of complex numbers and $g_n$ is any sequence of real numbers that is eventually positive, then we write

$\quad\bullet$ $f_n = O(g_n)$ if $|f_n| = O(g_n),$ and

$\quad\bullet$ $f_n = o(g_n)$ if $|f_n| = o(g_n).$

One key property of the modulus is that $|z^n| = |z|^n$ so if $|z| < w$ then $|z^n| = o(w^n).$

Assume that $(f_n)$ is a C-finite sequence with rational generating function $G(z)/H(z)$ where $G$ and $H$ are coprime, so $G$ does not vanish at any root of $H.$ In the expression $$ f_n = p_1(n) \lambda_1^{-n} + \cdots + p_s(n) \lambda_s^{-n}$$ from the C-Finite Coefficient Theorem, only the terms with $|\lambda_k|$ minimal will have the fastest asymptotic growth (with the other terms growing exponentially slower). Furthermore, among the terms with $|\lambda_k|$ minimal only those with maximal multiplicity will have the fastest asymptotic growth (with the other terms growing like a smaller power of $n$).

When there is a single such root, we can be explicit about asymptotic behaviour.

Corollary: Assume the hypotheses of the C-Finite Coefficient Theorem and that the polynomials $G$ and $H$ are coprime. If $|\lambda_1| < |\lambda_k|$ for all $k=2,\dots,s$ then $$ f_n = p_1(n)\lambda_1^{-n} + O(w^{-n}n^{\alpha-1}) $$ where $w = \displaystyle\min_{2 \leq k \leq s} |\lambda_k|>|\lambda_1|$ and $\alpha$ is the largest multiplicity of the roots of $H$ with modulus $w.$

Corollary: Assume the hypotheses of the C-Finite Coefficient Theorem and that the polynomials $G$ and $H$ are coprime. If $|\lambda_1| \leq |\lambda_k|$ for all $k=2,\dots,s$ and $\lambda_1$ has higher multiplicity $m$ than all other roots $\lambda_k$ of $H$ with $|\lambda_1|=|\lambda_k|$ then $$ f_n = \frac{m (-1)^m G(\lambda_1)}{\lambda_1^m H^{(m)}(\lambda_1)} \; n^{m-1} \; \lambda_1^{-n} + O(n^{m-2}). $$

Note that neither of these Corollaries are strictly stronger than the other: the first requires a unique root of minimal modulus but gives asymptotic behaviour up to exponential error, while the second relaxes this assumption but gives asymptotics only up to polynomial error.

Example: The class of integer partitions with parts of size at most 3 has rational generating function $$F(z) = \frac{1}{(1-z)(1-z^2)(1-z^3)}.$$ Find the asymptotic behaviour of the number $p_n$ of such partitions of size $n$ as $n\rightarrow\infty.$

Click for Answer

Since the denominator $H$ of $F$ factors as $H(z)=(1-z)^3(1+z)(1+z+z^2),$ we see that $H$ has a root of multiplicity $3$ at $z=1$ and all other roots have multiplicity $1$ (although all roots have modulus $1$). A direct computation shows that $H^{(3)}(1) = -36,$ so that $$ p_n = \frac{-3}{-36} n^2 + O(n) \sim \frac{n^2}{12}.$$

The following result is extremely useful for finding the roots of $H(z)$ with minimal modulus.

Vivanti-Pringsheim Theorem (Rational Version): Suppose that $F(z) = \frac{G(z)}{H(z)}$ for coprime polynomials $G$ and $H$ and that the power series expansion of $F$ at the origin only has a finite number of negative coefficients. Then one of the roots of $H$ of minimal modulus is a positive real number.

Exercise: Prove the Vivanti-Pringsheim Theorem by considering what happens to $|F(z)|$ as you approach a root of $H$ with minimal modulus.

The Vivanti-Pringsheim Theorem implies that to find the minimal roots of a rational generating function (which has non-negative coefficients corresponding to the counting sequence of a combinatorial class) we can search along the positive real line to find the smallest positive real root, then determine any other roots with the same modulus.

Example: Recall the look-and-say digit sequence $d_n$ with generating function $$ D(z) = \frac{G(z)}{H(z)} = \frac{1+z+\cdots+18z^{77}-12z^{78}}{1-z+\cdots-9z^{71}+6z^{72}}$$ introduced above. Using a computer algebra system and the Vivanti-Pringsheim Theorem, it is possible to prove that $H(z)$ has a positive real root $\lambda=0.7671\dots,$ all other roots of $H$ have larger modulus than $\lambda,$ and $H^\prime(\lambda),G(\lambda)\neq0.$ Thus, even though asymptotics of $d_n$ involve degree 72 algebraic numbers, we can still use implicit algebraic calculations to prove that $$ d_n \sim \frac{-G(\lambda)}{\lambda H^\prime(\lambda)} \approx (2.04\dots)(1.303\dots)^n.$$

Skolem’s Problem and $\mathbb{N}$-Rational Functions #

When the denominator $H$ has multiple roots with minimal modulus and the same multiplicity then there can be cancellation which makes it difficult to determine dominant asymptotic behaviour.

Example: The coefficients $$[z^n] \left( \frac{1}{1-4z^2} + \frac{1}{1-z/2}\right)= \begin{cases} 2^n + 2^{-n} &:\text{ $n$ even} \\[+2mm] 2^{-n} &:\text{ $n$ odd} \\[+2mm] \end{cases} $$ grow to infinity like $2^n$ when $n$ is even, and decay to zero like $2^{-n}$ when $n$ is odd. The denominator of this rational function has roots $\{1/2,-1/2,2\}$ and the contributions from the roots $1/2$ and $-1/2$ cancel when $n$ is odd.

Due to such pathological behaviour, even though there is an explicit formula for the $n$th term of a C-finite sequence, there are still many basic open problems.

Open Problem (Skolem’s Problem): Is there an algorithm that takes a rational function with integer coefficients and decides whether it has a power series coefficient equal to zero? Equivalently, can it be detected if a C-finite sequence contains a zero term.

In 1934, Skolem proved that the indices of zero terms in a C-finite sequence of rational numbers form a finite set together with a finite set of arithmetic progressions. Unfortunately, all proofs of Skolem’s theorem use $p$-adic analysis in a way which does not allow them to classify when such zeroes exist in general. Skolem’s Problem is known to be decidable for recurrences of orders at most 4, however it is still open for recurrences of order 5.

If $(f_n)$ is C-finite then the C-Finite Coefficient Theorem implies that $(f_n^2)$ is too. Thus, checking for zeroes can be reduced to detecting whether all terms in a C-finite sequence are positive. This can be further relaxed to give the following open problem.

Open Problem (Ultimate Positivity): Is there an algorithm that takes a rational function with integer coefficients and decides whether its power series coefficients are eventually positive? Equivalently, can it be detected if the terms in a C-finite sequence are eventually positive.

This makes things seem bleak: it’s hard to imagine determining asymptotics when decidability of such basic properties are unknown, however the extremely pathological behaviour that lead to such problems essentially never arises for combinatorial problems.

To be more precise, we define the set of $\mathbb{N}$-rational functions to be the smallest set $R$ of rational functions containing $1$ and the variable $z$ such that whenever $f,g \in R$ then the sum $f+g,$ the product $fg,$ and the pseudo-inverse $1/(1-zf)$ all lie in $R.$ The class of $\mathbb{N}$-rational functions thus represents all generating functions of classes with iterative specifications using only the neutral and atomic classes, and combinatorial product, sum, and sequence operations.

In theoretical computer science, $\mathbb{N}$-rational functions arise as the generating functions enumerating strings in regular languages (equivalent to classes of strings accepted by finite automata, an important computational model). It is a “meta-principle” in combinatorics that the generating functions of natural combinatorial classes are $\mathbb{N}$-rational. For instance, in the proceedings for the 2006 International Congress of Mathematics, Bousquet-Mélou wrote that she “never met a counting problem that would yield a rational, but not $\mathbb{N}$-rational, GF.” In his 2005 Masters thesis, Koutschan tested “about 60” sequences with rational generating functions algorithmically and found all of them to be $\mathbb{N}$-rational.

For us, the most important result about such functions is that the minimal roots of their denominators have nice behaviour.

Theorem (Berstel): If $F(z) = G(z)/H(z)$ is an $\mathbb{N}$-rational function and $\lambda$ is a root of $H$ with $|\lambda|$ minimal then there exists a positive integer $r$ such that $\lambda^r = |\lambda|^r.$

The theorem of Berstel implies that the coefficients of $\mathbb{N}$-rational functions have predictable periodic behaviour, so their asymptotic behaviour can be nicely characterized. Thus, using algorithms for manipulating algebraic numbers, it is essentially automatic to go from a C-finite recurrence defining a combinatorial sequence of interest to dominant asymptotic behaviour of the sequence.

Note: Although every $\mathbb{N}$-rational function is a rational function with natural number coefficients, there are rational functions with natural number coefficients that are not $\mathbb{N}$-rational. For instance, if $$ F(z) = \frac{2(1+3z)^2}{(1-9z)(1+14z+81z^2)}$$ then it can be shown that $F$ has natural number coefficients, but its denominator has roots whose integer powers never become positive and real. Thus, $F$ is not an $\mathbb{N}$-rational function by Berstel’s theorem. Soittola gave an algorithm to test whether a rational function is $\mathbb{N}$-rational.

Classifying C-Finite Sequences #

We can also use the results above to prove non-C-finiteness and irrationality.

Example: Prove the Catalan number sequence $c_n = \frac{1}{n+1}\binom{2n}{n}$ is not C-finite.

Click for Answer

The Catalan generating function $C(z) = \frac{1-\sqrt{1-4z}}{2z}$ is not rational, so $(c_n)$ is not C-finite.

Such results have further implications: for instance, the counting sequence of any language counted by a so-called finite automaton in theoretical computer science is known to be C-finite, so the last example implies that there is no finite automaton recognizing binary trees (which are counted by the Catalan numbers).

Example: Prove the series $$F(z) = \sum_{n \geq 1}\frac{z^n}{n^5}$$ is irrational.

Click for Answer

The sequence $(f_n)$ with $f_n = n^{-5}$ is not C-finite, because it does not satisfy the conditions of the C-finite asymptotic theorem (since $f_n$ has a negative power of $n$), so its generating function $F(z)$ is not rational.

Note that it has been an open problem for hundreds of years whether or not the constant $$\zeta(5) := F(1) = \sum_{n \geq 1} \frac{1}{n^5}$$ is rational. The extra structure present for generating functions, such as their coefficient behaviour, can be useful in proving irrationality. Asymptotic behaviour is also a useful classifier of sequence and generating function behaviour.

Example: Let $p_n$ be the $n$th prime number. Prove that the sequence $(p_n)$ is not C-finite.

Click for Answer

The prime number theorem implies $p_n = n\log n + n \log n \log\log n + O(n)$ and this asymptotic behaviour is not possible for C-finite sequences.

This example shows that it is not possible to generate the prime numbers using a linear recurrence with constant coefficients – in fact, the presence of a $\log \log n$ term in the asymptotics of $p_n$ implies that the prime numbers are not generated by any linear recurrence with polynomial coefficients.

Computing Terms in C-Finite Sequences #

Suppose $(f_n)$ satisfies the recurrence relation $$ \qquad f_n + c_1f_{n-1} + \cdots + c_r f_{n-r} = 0 $$ for all $n \geq r.$ How fast can we compute $f_N$ as a function of $N$?

The trick to computing $f_N$ efficiently is to express this recurrence relation in a way that allows us to compute a specific term without computing all previous terms. This is most easily accomplished using linear algebra (although other approaches are possible).

In particular, if $(f_n)$ satisfies the recurrence relation above then $$ \underbrace{ \begin{pmatrix} -c_1 & -c_2 & c_3 & \cdots & -c_r \\ 1 & 0 & 0 & \cdots & 0 \\ 0 & 1 & 0 & \cdots & 0 \\ \vdots & \ddots & \ddots & \ddots & \vdots \\ 0 & 0 & \cdots & 1 & 0 \end{pmatrix} }_M \; \underbrace{\begin{pmatrix} f_{r-1} \\ f_{r-2} \\ f_{r-3} \\ \vdots \\ f_0 \end{pmatrix}}_\mathbf{v} =\begin{pmatrix} f_{r} \\ f_{r-1} \\ f_{r-2} \\ \vdots \\ f_1 \end{pmatrix}. $$ Repeatedly multiplying by the matrix $M$ implies that $f_N$ is the final entry of the matrix-vector product $M^N\mathbf{v}.$ Since the entries of $\mathbf{v}$ are defined in terms of the initial conditions of the recurrence for $(f_n),$ to compute $f_N$ it is thus sufficient to compute the matrix power $M^N.$

Instead of computing $M^N$ through $N$ matrix multiplications $M^N = M \cdot M \cdot M \cdots M,$ we can be more efficient using binary powering.

BinaryPower(n):

If n=1 then return M

Else if n is even then return BinaryPower(n/2)^2

Else return M * BinaryPower((n-1)/2)^2

Example: If $(f_n)$ is the Virahanka-Fibonacci sequence satisfying $f_n -f_{n-1}-f_{n-2}=0$ for all $n\geq 2$ with $f_0=f_1=1$ then $$M = \begin{pmatrix} 1 & 1 \\1 & 0\end{pmatrix}$$ and terms in the sequence are computed by the following Sage function.

M = Matrix(ZZ,2,2,[1,1,1,0]) # matrix with rows [1,1] and [1,0] def bin_pow(n): if n == 1: return M elif n%2 == 0: return bin_pow(n/2)^2 else: return bin_pow((n-1)/2)^2*M def fib(n): return add(bin_pow(n)[1]) timeit('fib(10^6)') # compute v_(10^6)Running this on a 2020 Macbook Pro gives the output

Out: 25 loops, best of 3: 10.1 ms per loopwhich implies that we can compute the millionth Fibonacci number (having more than 200,000 decimal digits) in around 10 milliseconds.

The fact that this algorithm is so fast comes from a complexity analysis of binary powering.

Lemma: Computing $M^N$ using theBinaryPowerfunction uses $O(\log N)$ additions and multiplications of constants.

Proof Sketch

Note: Counting the number of additions and multiplications performed – known as the arithmetic complexity model – is not the most accurate measure of complexity, because it treats the cost to multiply two single digit numbers the same as the cost to multiply two 100 digit numbers. More realistic is the bit complexity model where the number of additions and multiplications of fixed-size integers is tracked. A detailed analysis proves that the $N$th term in a C-finite sequence can be computed in $O(N \log^2 N)$ additions and multiplications of fixed-size integers, and this linear complexity is observed in practice.

An efficient method for computing all terms $f_0,\dots,f_N$ relies on computing the first $N+1$ series coefficients of the rational generating function of $(f_n)$ using a Newton iteration-like algorithm beyond the scope of these notes.

Additional Problems #

(Problem 1) A ternary string is a sequence of 0s, 1s and 2s. Let $a_n$ be the number of ternary strings of length $n$ with no two consecutive 0s.

(a) Prove that $a_n = 2a_{n-1} + 2a_{n-2}$ for all $n\ge 2.$

(b) Determine an explicit formula for $a_n$ for all $n\ge 0.$

Problem 2 Consider the recurrence relation $$2a_{n + 2} = 5a_{n+1} - 2a_n \qquad \text{for all $n \geq 0$}$$ with the initial conditions $a_0=A$ and $a_1=B$ for constants $A$ and $B.$ Find a closed form for $a_n$ in terms of the constants $A$ and $B.$ What are the possible asymptotic behaviours that $a_n$ can have, depending on the choices of $A$ and $B$? If we randomly selected $A$ and $B,$ what asymptotic behaviour would we expect to see?

(Problem 3) Fix positive integers $a_1,\dots,a_r$ with greatest common divisor 1 (i.e., no integer greater than 1 divides all $a_i$). Let $s_n$ be the number of solutions to the equation $$ n = a_1z_1 + \cdots + a_rz_r \;\;\text{ with }\;\; z_k \in \mathbb{N} \;\;\text{ for all }\;\; 1 \leq k \leq r. $$ (a) Prove that $(s_n)$ has generating function $$ S(x) = \frac{1}{(1-x^{a_1})(1-x^{a_2})\cdots(1-x^{a_r})}. $$

(b) Partial fraction decomposition implies that we can write $$ S(x) = \frac{C}{(1-x)^r} + \frac{P(x)}{Q(x)} $$ for some constant $C$ and polynomials $P$ and $Q$ such that $(1-x)^r$ does not divide $Q.$ Find $C.$ Hint: If $k$ is a positive integer then $1-x^k$ has a factorization that you might find useful.

(c) Find asymptotics for $s_n.$ Make sure you justify which roots of the denominator contribute to your asymptotic formula.

(Problem 4) Let $A=\{a,b,c,\dots,z\}$ be the set containing the 26 letters of the English alphabet. In this question we use rational generating functions to explain occurrences of arbitrary patterns in large bodies of text. An occurrence of a word in a string is a sequence of the letters in the word in order but not necessarily consecutive. For instance, the string $$ \begin{aligned} &\text{Therefore, since {\color{red}{\textbf{br}}}ev{\color{red}\textbf{i}}ty is th{\color{red}\textbf{e}} soul o{\color{red}\textbf{f}} wit,} \end{aligned} $$ contain an occurrence of $\color{red}\textbf{brief}$ under these rules (spaces are included for readability, but we’re really only interested in the letters).

We consider each of the letters $a,b,\dots,z$ to be atomic elements (of size 1). Let $A_a = A \setminus \{a\}$ be the class of all letters except for $a,$ and define $A_b,A_c,\dots,A_z$ similarly. Finally, if $C$ is a class with no objects of size zero we write $C^*=\text{SEQ}(C)$ (this is common notation for sequences of strings).

(a) Explain why the class $S$ of strings defined by

$$

\begin{aligned}

A_w^* &\times \{w\} \times A_a^* \times \{a\} \times A_t^* \times \{t\} \times A_e^* \times \{e\} \times A_r^* \times \{r\} \\

&\times A_l^* \times \{l\} \times A_o^* \times \{o\} \times A_o^* \times \{o\} \times A^*

\end{aligned}

$$

contains all strings on the letters ${a,b,\dots,z}$ with at least one occurrence of the word waterloo (with letters not necessarily consecutive) and show that the generating function of this class is

$$ S(x) = \frac{x^8}{(1-25x)^8(1-26x)}.$$

(b) The probability that a string of length $n$ on the letters ${a,b,\dots,z}$ contains at least one copy of the word \textsc{waterloo} is $\frac{[x^n]S(x)}{26^n}.$ Prove that as $n$ goes to infinity this probability approaches one (so any large enough random string should contain waterloo).

(c) Let $T$ be the class of strings defined by

$$

\begin{aligned}

A^* &\times \{w\} \times A^* \times \{a\} \times A^* \times \{t\} \times A^* \times \{e\} \times A^* \times \{r\} \\

&\times A^* \times \{l\} \times A^* \times \{o\} \times A^* \times \{o\} \times A^*.

\end{aligned}

$$

Explain why the expected (average) number of occurrences of waterloo in a text with $n$ letters is $\frac{[x^n]T(x)}{26^n}.$

(d) The text of Hamlet contains 132,680 alphabetical letters: assuming the distribution of letters in Hamlet is the same as as a random string of this length, how many occurrences of waterloo would we expect to find in Hamlet? (Your answer should be a simple exact expression potentially involving powers and binomial coefficients)

(e) In a more general setting, find a formula for the expected number of occurrences of a word of length $m$ in a string of length $n$ over an alphabet with $a$ letters. (In part (d) we have $m=8$ for the length of Waterloo and $a=26$ for the number of letters in the English alphabet).

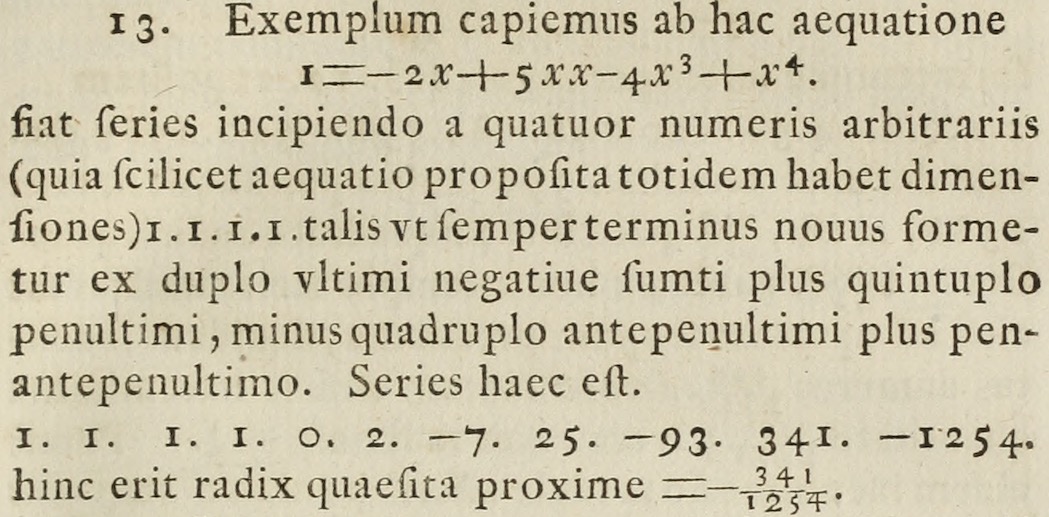

(Problem 5) Figure 1 shows a partial scan of a 1728 paper by Daniel Bernoulli. This section shows Bernoulli trying to approximate a root to the polynomial equation $1=-2x+5x^2-4x^3+x^4$ by forming a sequence beginning $1,1,1,1,0,2,-7,25,\dots$ and taking the ratio $-341/1254$ of consecutive terms. Bernoulli’s method is fairly accurate: running

(-1-2*x+5*x^2-4*x^3+x^4).roots(x,ring=RR,multiplicities=False)

in Sage gives the solutions

Out: [-0.272019649514069, 2.27201964951407]

while $-341/1243 = -0.27192\dots$ (so we get almost 3 digits of a solution from only 10 terms of the sequence).

(a) Explain how Bernoulli constructed this sequence, and why its ratio of terms approaches a root of $1=-2x+5x^2-4x^3+x^4.$ You do not need to give a fully rigorous proof, but should clearly explain the reasoning behind why this works (for instance, you can show why it works assuming some properties of the roots of $1=-2x+5x^2-4x^3+x^4$ that you clearly state but do not need to prove).

(b) The value $x=-0.27201\dots$ appears because it is the root of $1=-2x+5x^2-4x^3+x^4$ with smallest modulus (this fact is also a hint to part a). Modify this method to approximate the root $x=2.27\dots$ of $1=-2x+5x^2-4x^3+x^4$ that has largest modulus by taking a ratio of terms in a sequence satisfying a linear recurrence. Again, you may clearly state properties of the roots of $1=-2x+5x^2-4x^3+x^4$ that you assume but do not need to prove.

(You only need to describe the method, but I suggest you actually compute terms in your sequence and use this to compute an approximation to the largest root – if you do everything by hand, including long division of the final fraction, then you can imagine you are solving polynomials 300 years ago! You will probably also get a better appreciation for computer algebra systems.)

(c) Let $p(x) \in \R[x]$ be any polynomial with a unique root $w \neq 0$ of minimal modulus. Describe how to numerically approximate $w$ by taking a ratio of terms in a sequence satisfying a linear recurrence (mention whether or not you require any assumptions when picking the initial conditions of your recurrence). If you were to run this algorithm on actual examples you would see that the ratios of sequence terms converge to $w$ much faster when $w$ is a simple root of $p(x)$ (this is why Bernoulli’s method works so well on his example) – give a brief explanation of why this is true.